Finding a compelling value proposition when targeting a new use case for your product or expanding into a new niche can be tricky. Of course, you can trust your intuition and make a guess, but if you guess wrong, you'll waste time.

Instead of making blind guesses, it’s wiser to make decisions backed by data. The simplest way to find out what value proposition speaks best to the new segment of prospects you’re targeting is to A/B test different approaches. That’s what we did.

We wanted to reach out to a new segment of prospects from a niche we hadn't targeted before to show them how Woodpecker can be used in their daily work. We narrowed our value proposition ideas down to two and A/B tested which one would resonate better. We did that in three steps.

Step #1 We found prospects that match the ICP

We started by defining our ICP for this particular case. We approached it by reverse engineering, so to speak.

We took a look at our current user base to find clients who already used Woodpecker in their customer success processes. Based on this data, we were able to build a picture of which persona we should target and which value proposition to highlight in our email copy.

With the ICP in mind, we started looking for prospects who fit the criteria: customer success managers and team leaders from medium-sized SaaS companies. We then narrowed the targeting further to those who had recently changed jobs. Why? We thought they would be more interested in trying Woodpecker as a solution to the challenges of their new role. It was an assumption we wanted to test.

Step #2 We prepared two email versions for A/B testing

We learned from our research that customer success teams use Woodpecker in two main ways: for onboarding new clients and for customer retention. Given that, we decided to test two value propositions.

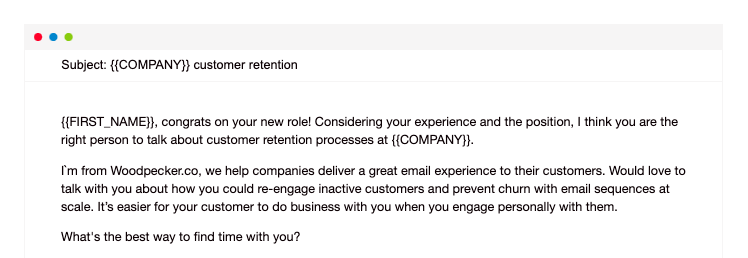

Version A took aim at churn prevention:

We stressed the importance of maintaining personal contact with customers to keep them engaged, give them a helping hand when necessary, and ensure they have a great user experience.

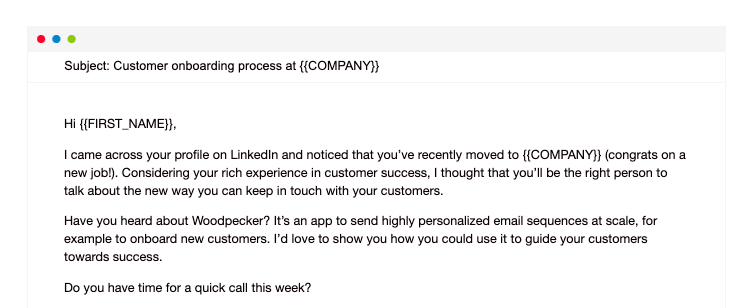

Version B focused on creating personalized user onboarding email sequences to introduce users to a new tool, highlight the value, and guide them on how to achieve the best results:

The subject lines of the two versions also reflect the difference in value propositions. It's crucial to keep the subject line consistent with the context of the rest of the message. You can read more about it here: How to Write a Subject Line That Will Make Prospects Open a Cold Email? >>

The CTA remains pretty much the same in both versions: the goal of this campaign was to schedule a demo call.

We also scheduled three personalized follow-ups to be sent to those who, for some reason, missed the first email.

If you’d like to learn more about how to do A/B tests in Woodpecker, check this blog post: Step-by-step Guide to A/B Testing Cold Emails and Follow-ups in Woodpecker >>

Step #3 We analyzed the stats and drew conclusions

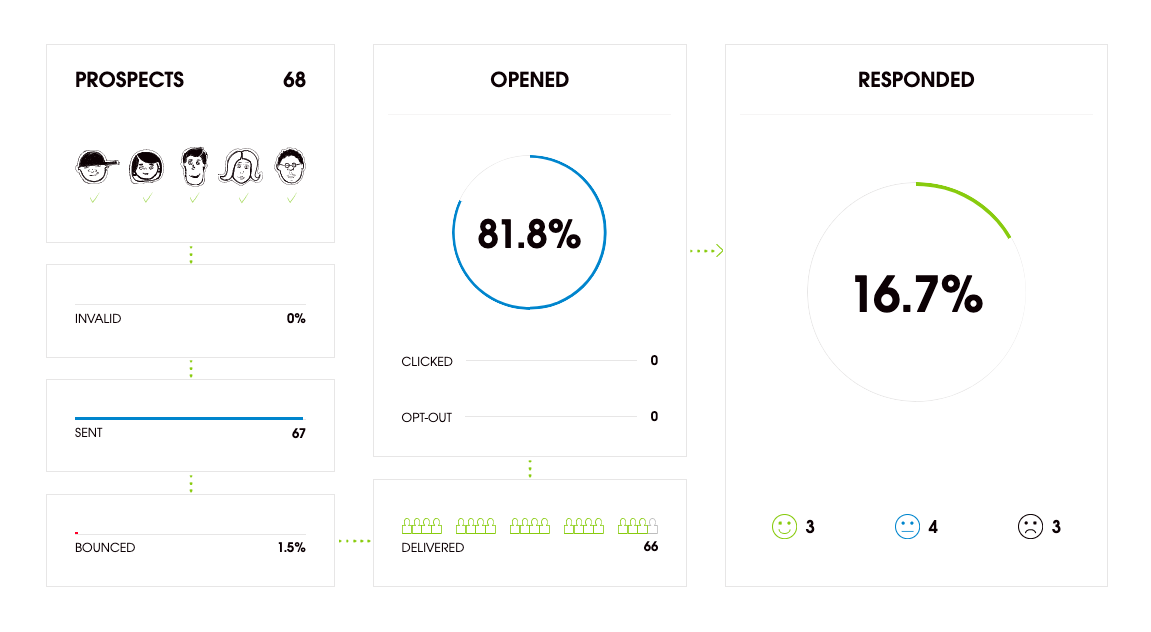

The campaign was sent to 68 prospects. We tend to keep our campaigns lean because it allows for more precise targeting (here’s more about it). It also gives room to more advanced personalization, which we believe is key to succeed in cold emailing and translates to better email deliverability.

Here are the campaign’s stats as a total:

The total open rate is a very good result – it’s a sign that both subject line versions hit the right note.

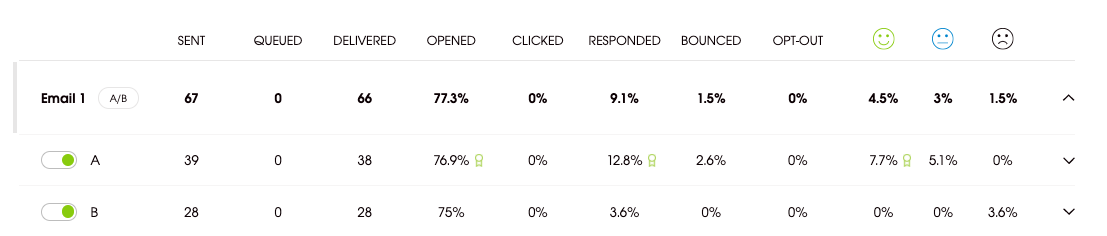

Now let’s have a look at each email version’s performance:

While there's no big difference between the open rates, the stats clearly show that version A generated more replies (12.8%) than version B (3.6%). What's more, version A reached a positive reply rate of 7.7%.

From this data, we can conclude that the value proposition focused on customer retention most likely addresses a more relevant issue and a bigger pain point for this group of prospects. It gives us valuable guidance on the direction our future campaigns can take and where we should put more focus.
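If you want to run the same kind of comparison on your own campaign, the sketch below computes a per-version reply rate and a two-sided Fisher exact test p-value for "replied vs. did not reply" across two versions. It is an illustrative helper, not a Woodpecker feature: the function names are hypothetical, and since the post reports percentages rather than per-version send counts, you would plug in the raw counts from your own campaign stats. Fisher's exact test is used here instead of a normal-approximation z-test because it stays valid for small samples, such as a campaign sent to a few dozen prospects.

```python
from math import comb


def reply_rate(replies: int, sent: int) -> float:
    """Percentage of contacted prospects who replied."""
    if sent <= 0:
        raise ValueError("sent must be positive")
    return 100.0 * replies / sent


def fisher_exact_two_sided(a_replies: int, a_sent: int,
                           b_replies: int, b_sent: int) -> float:
    """Two-sided Fisher exact test p-value for the 2x2 table:

        version A: a_replies replied, a_sent - a_replies did not
        version B: b_replies replied, b_sent - b_replies did not
    """
    total_replies = a_replies + b_replies
    total_sent = a_sent + b_sent
    denom = comb(total_sent, total_replies)

    def prob(k: int) -> float:
        # Hypergeometric probability that version A received k of the replies,
        # given the fixed number of prospects per version and total replies.
        return comb(a_sent, k) * comb(b_sent, total_replies - k) / denom

    observed = prob(a_replies)
    lo = max(0, total_replies - b_sent)
    hi = min(a_sent, total_replies)
    # Sum the probabilities of every possible table that is no more likely
    # than the observed one (small tolerance guards against float noise).
    p = sum(prob(k) for k in range(lo, hi + 1)
            if prob(k) <= observed * (1 + 1e-9))
    return min(1.0, p)
```

A large gap in reply rates combined with a high p-value means the gap could plausibly be noise at that sample size, which is one more reason to keep testing the winning version.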

This is not the end

Does it mean that from now on we’ll send only version A of the email? Nope. The door to further optimization is wide open. We’ll start working on boosting the reply rate first and then experiment with different approaches to presenting the winning value proposition. Cold emailing is a game of trial and error – there’s always some room for testing.